- Blog

- J cole immortal flp

- Watch hannah montana season 3 episode 31

- Pof app blocked by network

- Hearts of iron 4 steam code sale

- Csr 4-0 bluetooth driver vista

- Be quiet silent wings 3 review

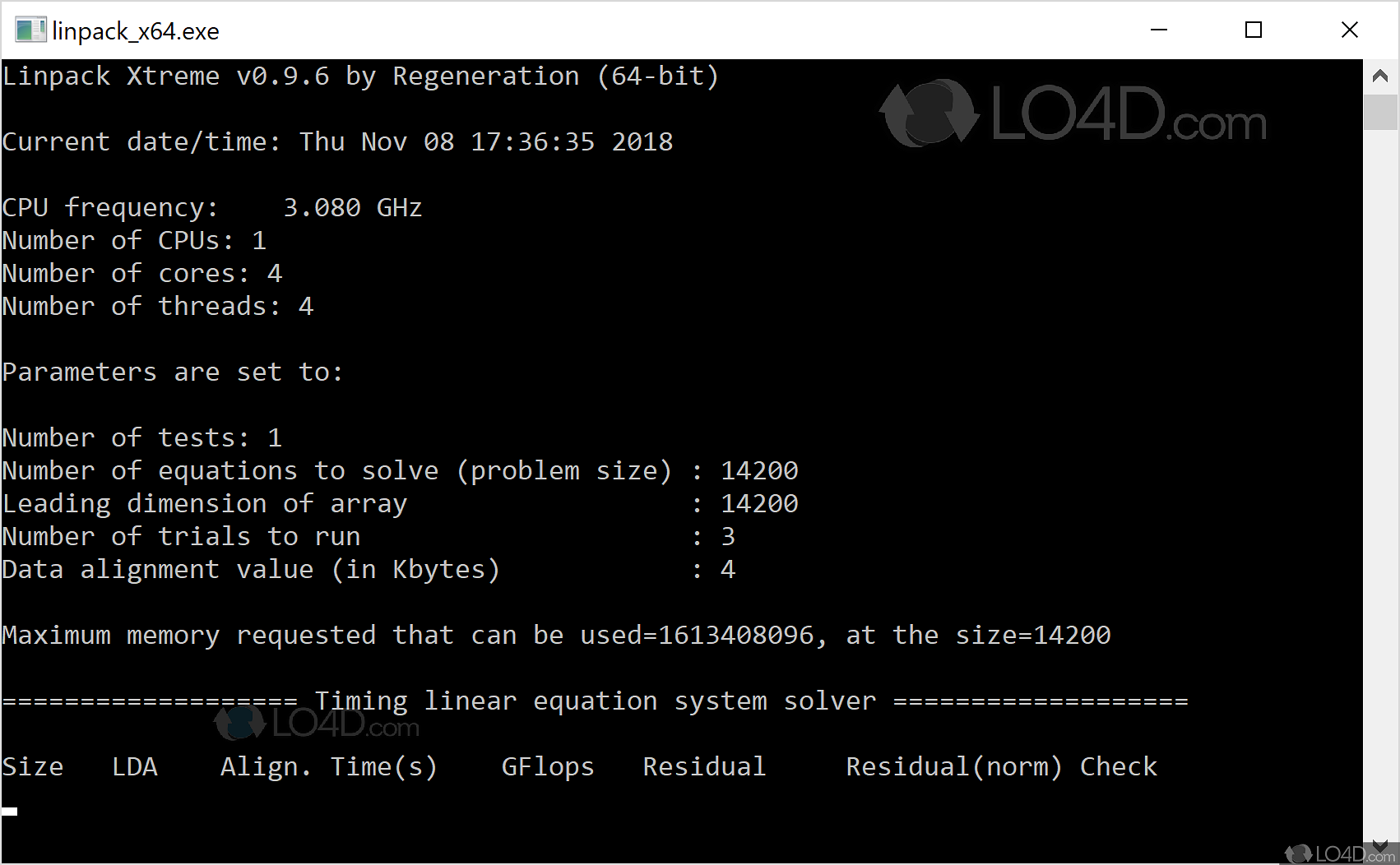

- Linpack benchmark cpu

- Autodesk hsmworks

- Download robotc for vex

- North arcot south arcot vadivelu dialogue

- Goat simulator goatz invitory

- Devon ke dev mahadev episode 43

- What is the great gatsby font

- Kenwood stereo control amplifier kc-105

- Limbo 2 box gravity

Linpack benchmark CPU

We're running the latest Intel Linpack on a server that is being reported as running slowly. A Linpack run on the idle server gives us unexpected results.

While the MFLOP values look reasonable, the "CPU frequency" reported by the test is very low and tends to support the belief that the server is running slowly, yet the Linpack test results seem OK. If I were to reboot the server, it would report ~3.2GHz.

Does anyone know how the CPU frequency is determined, and should we trust it?

Model name : Intel(R) Xeon(R) CPU E5-2680 v3 2.50GHz

Flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc arch_perfmon pebs bts rep_good xtopology nonstop_tsc aperfmperf pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 fma cx16 xtpr pdcm pcid dca sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand lahf_lm abm ida arat epb xsaveopt pln pts dtherm tpr_shadow vnmi flexpriority ept vpid fsgsbase bmi1 avx2 smep bmi2 erms invpcid cqm cqm_llc cqm_occup_llc

Address sizes : 46 bits physical, 48 bits virtual

This is a SAMPLE run script for SMP LINPACK. [...] the correct number of CPUs/threads, problem input files, etc.

Intel(R) Optimized LINPACK Benchmark data

Current date/time: Wed Mar 15 08:18:39 2017

Number of trials to run : 4 2 2 2 2 2 2 2 2 2 1 1 1 1 1

Data alignment value (in Kbytes) : 4 4 4 4 4 4 4 4 4 4 4 1 1 1 1

Maximum memory requested that can be used=16200901024, at the size=45000

There is no way to directly obtain the CPU frequency from user-mode code - all of the registers that contain the information are only accessible from kernel mode. Unfortunately, the act of making a kernel call is very often enough to cause the frequency to change.

In Linux there are APIs for requesting information about the current frequency, but most of these are not reliable - either they report a frequency that was requested (but perhaps not actually provided), or they measure the actual cycles over a short interval and compute the corresponding average frequency. The latter approach is better, but it can easily run into problems with dynamic frequency scaling if the interval is too short or if the core being monitored is not running "relevant" code during that interval.

You can measure the average frequency from user space if the performance counters are enabled.
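The arithmetic behind an average-frequency measurement is simple, and a small sketch may help when judging the "CPU frequency" figure that Linpack prints. The Python below is an illustration only: the function names and the sample counter values are hypothetical, and it assumes the counter readings were obtained elsewhere (for example from the APERF and MPERF registers advertised by the `aperfmperf` flag, which need kernel support to read). It does not read any hardware counter itself.

```python
def average_frequency_hz(cycles_start, cycles_end, seconds_start, seconds_end):
    """Average core frequency over an interval, from two readings of a
    core-cycle counter and the wall-clock times at which they were taken."""
    elapsed = seconds_end - seconds_start
    if elapsed <= 0:
        raise ValueError("the interval must have positive length")
    return (cycles_end - cycles_start) / elapsed


def effective_frequency_hz(base_hz, aperf_delta, mperf_delta):
    """Average frequency while the core was active, from APERF/MPERF deltas.

    MPERF advances at a fixed reference rate and APERF at the actual core
    rate, so their ratio scales the base frequency. Using the nominal
    frequency as base_hz is an assumption of this sketch.
    """
    if mperf_delta <= 0:
        raise ValueError("MPERF did not advance; the core may have been idle")
    return base_hz * aperf_delta / mperf_delta


if __name__ == "__main__":
    # Hypothetical readings, not taken from the server discussed here.
    print(average_frequency_hz(0, 1_200_000_000, 0.0, 0.5) / 1e9, "GHz")
    print(effective_frequency_hz(2.5e9, 3_200_000, 2_500_000) / 1e9, "GHz")
```

The interval matters as much as the formula: a very short window, or one in which the core is mostly idle, gives a number that says little about the frequency seen while the benchmark itself is running.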